Azure Files identity-based authentication: Active Directory Domain Services (AD DS)

Azure Files supports identity-based authentication over Server Message Block (SMB) through on-premises Active Directory Domain Services (AD DS) and Azure Active Directory Domain Services (Azure AD DS).

This article focuses on how to enable identity-based authentication on Azure Files over SMB through on-premises Active Directory Domain Services.

Why is identity-based authentication important?

What you need to know before you get started

- On-premises Active Directory must be synced to Azure AD.

- Supports Kerberos authentication with AD with RC4-HMAC and AES 256 encryption. AES 256 encryption support is currently limited to storage accounts with names <= 15 characters in length. AES 128 Kerberos encryption is not yet supported.

- Supports only clients running Windows 7 or Windows Server 2008 R2 and later.

- Supports authentication only against the AD forest that the storage account is registered to.

- Does not support authentication against computer accounts created in AD DS.

- Does not support authentication against Network File System (NFS) file shares.
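The storage account name-length rule in the list above is easy to overlook, so here is a minimal sketch that reports which Kerberos encryption types a given account name would allow. It only encodes the limits stated in this article; the function name `kerberos_encryption_options` is hypothetical and is not part of any Azure SDK.

```python
# Limit taken from the article: AES 256 needs an account name of <= 15 characters.
AES256_MAX_NAME_LENGTH = 15


def kerberos_encryption_options(account_name: str) -> list:
    """Return the Kerberos encryption types usable for this storage account name."""
    options = ["RC4-HMAC"]  # Supported regardless of name length.
    if len(account_name) <= AES256_MAX_NAME_LENGTH:
        options.append("AES 256")
    return options
```

Calling it with a short name returns both encryption types, while a name longer than 15 characters returns only RC4-HMAC.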

Steps to be followed

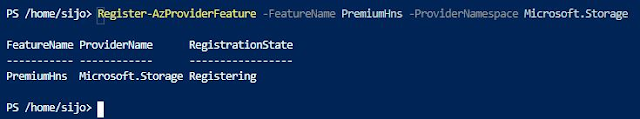

Part one: enable AD DS authentication on your storage account

Part two: assign access permissions for a share to the Azure AD identity (a user, group, or service principal) that is in sync with the target AD identity

Part three: configure Windows ACLs over SMB for directories and files

An administrator with full access to the share can mount it on a domain-joined computer and set the necessary NTFS permissions as follows.

Configure Windows ACLs with Windows File Explorer or icacls

Example: icacls <mounted-drive-letter>: /grant <user-email>:(f)

The following permissions are included on the root directory of a file share:

- BUILTIN\Administrators:(OI)(CI)(F)

- BUILTIN\Users:(RX)

- BUILTIN\Users:(OI)(CI)(IO)(GR,GE)

- NT AUTHORITY\Authenticated Users:(OI)(CI)(M)

- NT AUTHORITY\SYSTEM:(OI)(CI)(F)

- NT AUTHORITY\SYSTEM:(F)

- CREATOR OWNER:(OI)(CI)(IO)(F)
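To make the entries above easier to read, the sketch below decodes the parenthesized icacls codes into plain descriptions. It is an illustrative helper, not part of any Windows or Azure tooling: the function name `decode_ace` is hypothetical, and the lookup tables cover only the codes that appear in this list.

```python
import re

# Meanings of the icacls codes used in the default root ACL above.
INHERITANCE = {
    "OI": "object inherit",
    "CI": "container inherit",
    "IO": "inherit only",
}
RIGHTS = {
    "F": "full access",
    "M": "modify access",
    "RX": "read and execute access",
    "GR": "generic read",
    "GE": "generic execute",
}


def decode_ace(entry: str) -> dict:
    """Split an entry such as 'BUILTIN\\Users:(OI)(CI)(IO)(GR,GE)' into parts."""
    principal, _, flags = entry.partition(":")
    inheritance, rights = [], []
    # Each parenthesized group holds one code or a comma-separated list of codes.
    for group in re.findall(r"\(([^)]*)\)", flags):
        for code in group.split(","):
            code = code.strip().upper()
            if code in INHERITANCE:
                inheritance.append(INHERITANCE[code])
            elif code in RIGHTS:
                rights.append(RIGHTS[code])
            else:
                rights.append(f"unknown code {code}")
    return {
        "principal": principal.strip(),
        "inheritance": inheritance,
        "rights": rights,
    }
```

For instance, the entry for CREATOR OWNER decodes to full access that is inherited by child files and folders but does not apply to the root directory itself (inherit only).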

Note: TCP port 445 must be allowed in the NSG and firewall rules.

To give a user or group read access to an Azure file share, assign the following role:
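A quick way to confirm the port requirement is to attempt a TCP connection from the client. The sketch below uses only the Python standard library; `can_reach` is a hypothetical helper name. A successful connection shows only that the port is reachable, not that SMB authentication will succeed.

```python
import socket


def can_reach(host: str, port: int = 445, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host name and opens a TCP socket.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False
```

You would call it as `can_reach("<storage-account>.file.core.windows.net")`, substituting your own storage account name. That host name pattern assumes the Azure public cloud endpoint.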

- Storage File Data SMB Share Reader allows read access in Azure Storage file shares over SMB.

Part four: mount an Azure file share to a VM joined to your AD DS

A user can access the share by entering its UNC path in the Run dialog box, without entering a password.

A user can also access the share by entering its UNC path in File Explorer, without entering a password.

The most convenient way is to map the share as a network drive in File Explorer.
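As a rough illustration of the UNC path and drive mapping described above, the sketch below builds both strings. It assumes the Azure public cloud endpoint suffix `file.core.windows.net`. The helper names and the account and share names in the usage note are hypothetical.

```python
def share_unc_path(account: str, share: str,
                   suffix: str = "file.core.windows.net") -> str:
    """Build the UNC path of an Azure file share (public cloud suffix assumed)."""
    return "\\\\{}.{}\\{}".format(account, suffix, share)


def net_use_command(drive_letter: str, unc_path: str) -> str:
    """Build a 'net use' command that maps the share without explicit credentials."""
    letter = drive_letter.rstrip(":").upper()
    return f"net use {letter}: {unc_path}"
```

With an account named `mystorageacct` and a share named `myshare`, the path is `\\mystorageacct.file.core.windows.net\myshare`. The generated command passes no user name or password, because a domain-joined client authenticates with the signed-in user's Kerberos identity.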

Note: Effective access to a share is determined by the share-level permissions assigned through the Azure file share IAM, together with the more granular Windows ACLs (NTFS permissions) applied to its directories and files.

Part five: update the password of your storage account identity in AD DS

https://docs.microsoft.com/en-gb/azure/storage/files/storage-files-identity-ad-ds-update-password

If you registered the Active Directory Domain Services (AD DS) identity or account that represents your storage account in an organizational unit or domain that enforces a password expiration time, you must change the password before the maximum password age is reached. Your organization may run automated clean-up scripts that delete accounts once their passwords expire. If you do not change the password before it expires, the account could be deleted, and you would lose access to your Azure file share.
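To keep track of that deadline, a small calculation like the one below can help. It is a sketch only: it assumes you already know when the password was last set and the maximum password age enforced by your domain policy, and the function names are hypothetical. It does not query AD DS or change the password.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def password_deadline(last_set: datetime, max_age_days: int) -> datetime:
    """Return the moment the password reaches the maximum password age."""
    return last_set + timedelta(days=max_age_days)


def days_until_expiry(last_set: datetime, max_age_days: int,
                      now: Optional[datetime] = None) -> int:
    """Return whole days left before expiry (negative once it has passed)."""
    now = now or datetime.now(timezone.utc)
    return (password_deadline(last_set, max_age_days) - now).days


def needs_rotation(last_set: datetime, max_age_days: int,
                   warn_days: int = 14, now: Optional[datetime] = None) -> bool:
    """Return True when the password is within warn_days of expiring."""
    return days_until_expiry(last_set, max_age_days, now) <= warn_days
```

The 14-day warning window is an arbitrary default chosen for illustration. Pick a margin that suits your own rotation process.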